Over the past 12 months much has changed for us here at Fathom: not only have we added two new members to the team, but we’ve also taken on a new name!

We began the year as SSBN and finished it as Fathom. Alongside this name change came some fantastic new research that has significantly improved our flood modelling capabilities and, in turn, the services and products that we offer to our clients.

Our progress over the past 12 months is in no small part down to the efforts of our new team members, Dr Niall Quinn and Dr Ollie Wing, who joined us from the University of Bristol early in 2017. Bringing on board motivated and capable young scientists has been both rewarding and productive, and we are all excited about what 2018 will bring.

Research highlights of 2017

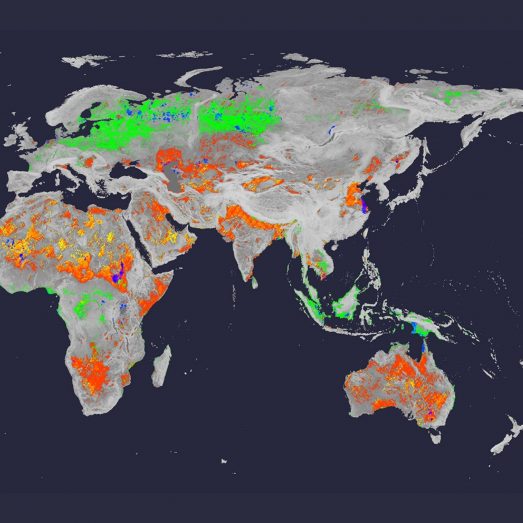

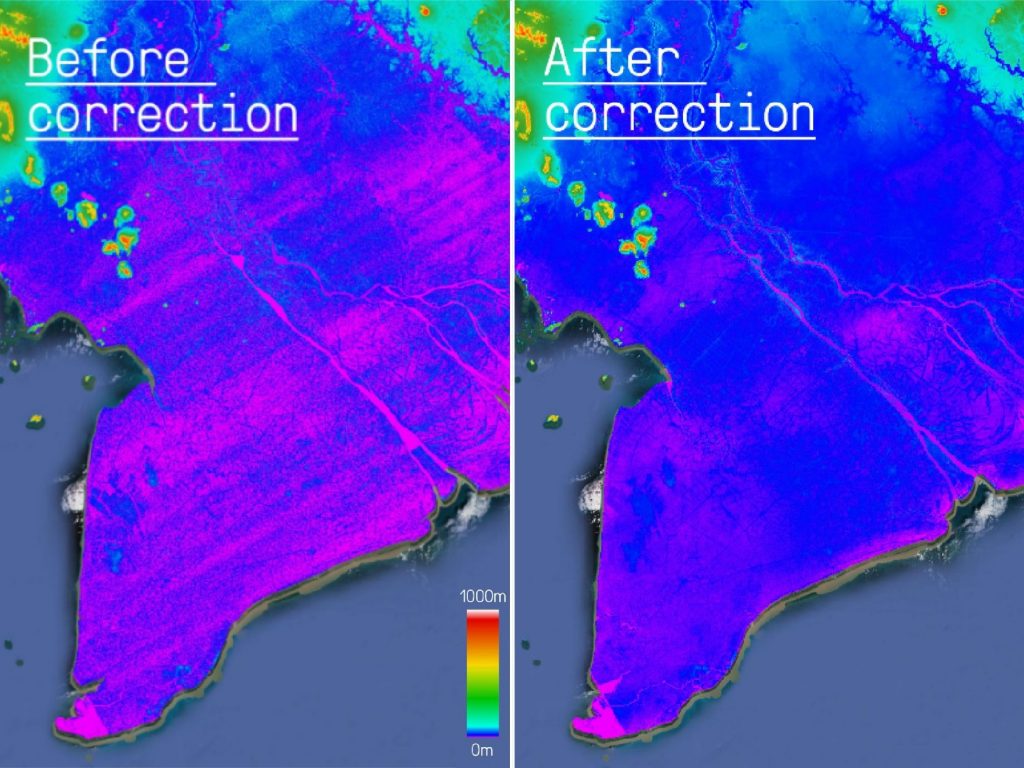

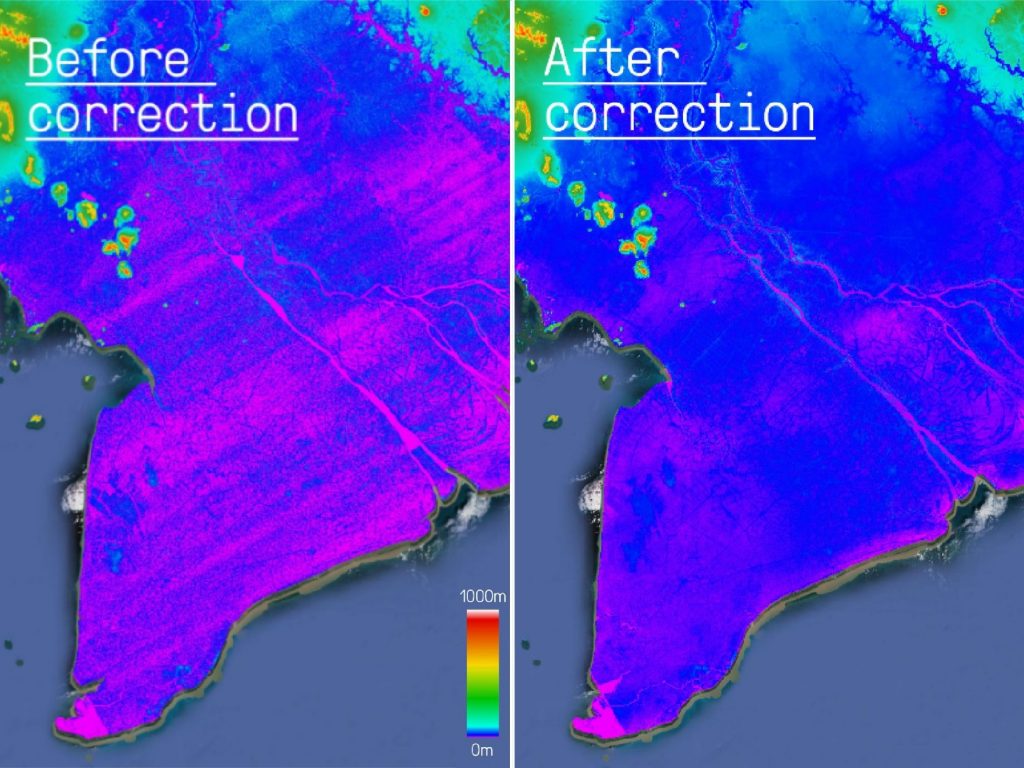

One of our research highlights of 2017 was the release of a new global terrain dataset, or Digital Elevation Model (DEM), called the ‘Multi Error Removed Improved Terrain’ (MERIT) DEM. When building flood models in data-poor regions (which in fact cover most of the Earth’s land surface), one of the biggest challenges is dealing with uncertain or erroneous terrain data.

If your map of the Earth’s surface is incorrect, it is difficult to build an accurate flood model; after all, water flows downhill! The release of MERIT DEM constitutes a major improvement in global flood modelling capability. The work, led by a colleague in Japan, has significantly reduced the vertical errors present in the previous global DEM. We were therefore thrilled to be able to run the next generation of our Global Data on this new DEM.

Hurricane Harvey event response

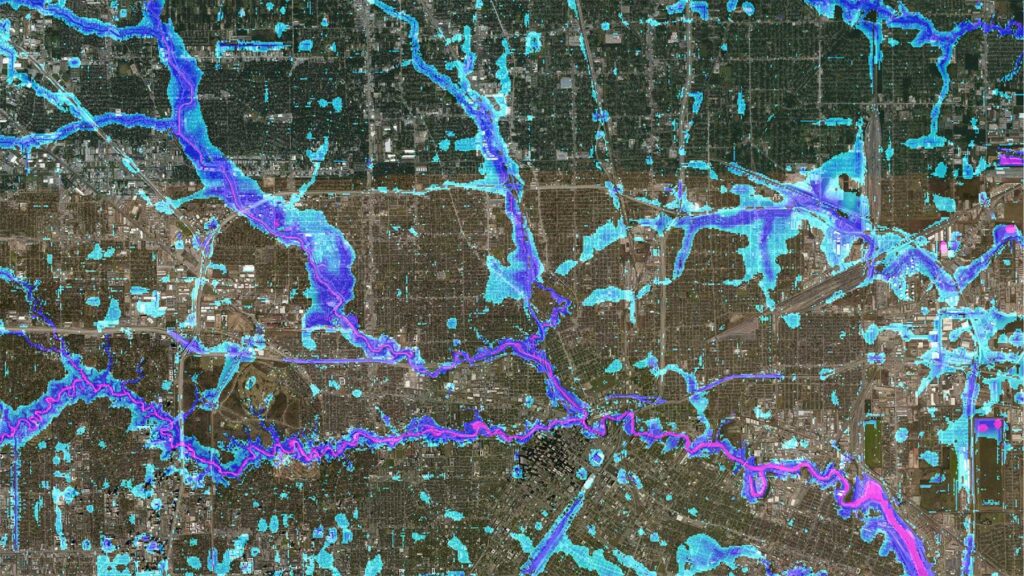

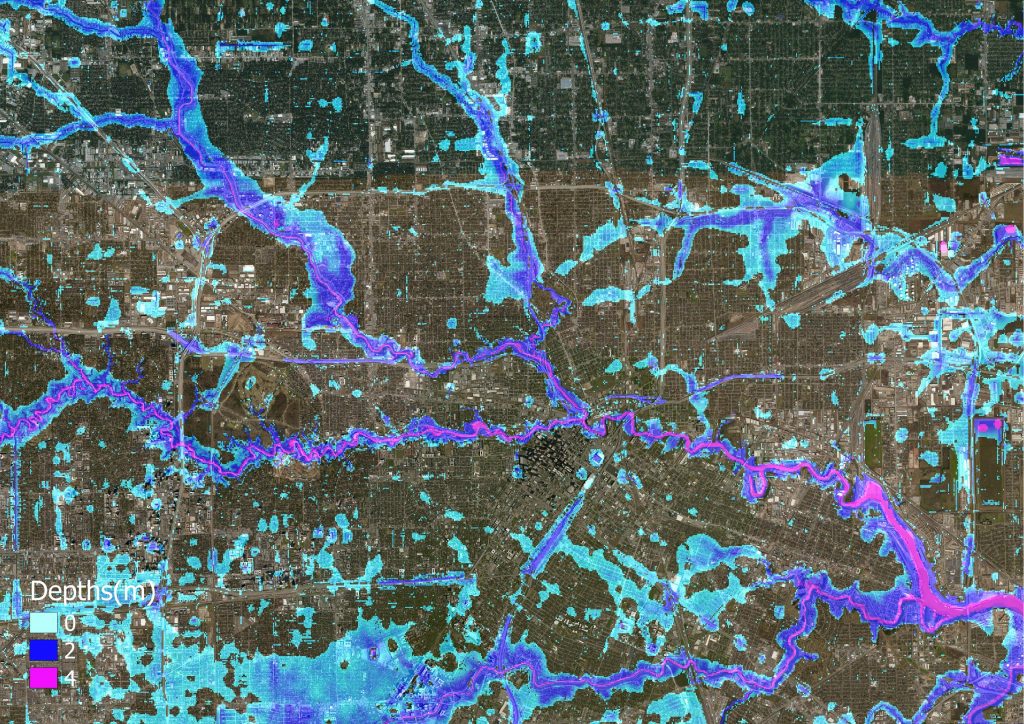

The 2017 hurricane season proved to be one of the most active since records began, with 17 named storms including Harvey, Irma and Maria. Back in August, we began to receive warnings from colleagues in the US that Hurricane Harvey might be about to release unprecedented amounts of rainfall on the city of Houston, Texas.

In response, we ran event simulations to see whether we could produce data that would be useful to responders. We were delighted to see our data picked up by multiple users, including NASA, who used them to produce potential loss estimates across the city. Subsequent validation work demonstrated hit rates of between 60% and 84% across different validation datasets. Although areas for improvement were identified, the work has demonstrated that skilful near-real-time inundation data are now a reality.
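For readers unfamiliar with the metric: hit rate is commonly defined as the fraction of cells observed to be flooded in the benchmark data that the model also predicts as flooded. The short Python sketch below illustrates that common definition on a toy six-cell grid. The function name `hit_rate` and its inputs are hypothetical, and this is an illustration of the general metric, not necessarily the exact formulation used in our Harvey validation work.

```python
from typing import Sequence


def hit_rate(model_wet: Sequence[bool], observed_wet: Sequence[bool]) -> float:
    """Fraction of observed-wet cells that the model also predicts as wet.

    Hypothetical helper for illustration only. Both inputs are flattened
    grids of the same length, where True means the cell is flooded.
    """
    if len(model_wet) != len(observed_wet):
        raise ValueError("model and observed grids must have the same size")
    # Hits: cells flooded in both the model and the observations.
    hits = sum(1 for m, o in zip(model_wet, observed_wet) if m and o)
    # Misses: cells flooded in the observations but dry in the model.
    misses = sum(1 for m, o in zip(model_wet, observed_wet) if o and not m)
    if hits + misses == 0:
        raise ValueError("no observed flooded cells; hit rate is undefined")
    return hits / (hits + misses)


# Toy example: four cells were observed flooded and the model captures
# three of them. It also wrongly floods the fifth cell (a false alarm).
model = [True, True, True, False, True, False]
observed = [True, True, True, True, False, False]
print(f"{hit_rate(model, observed):.0%}")  # prints "75%"
```

Note that the false alarm in the fifth cell does not change the score: hit rate rewards capturing observed flooding but ignores overprediction, which is why it is usually reported alongside other measures such as the false alarm ratio.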

Participating in a UN meeting

In November, we were invited to participate in an expert meeting at the United Nations in Geneva. More than 150 experts attended, and it proved to be an interesting and productive few days (you can read my thoughts on the meeting here). One of the key messages to emerge from the meeting was that there is much to be learnt from the insurance industry about how it manages risk, mainly in developed countries. These advances have been made simply because of the capital exposed in richer economies.

If these methods are to be replicated across developing regions, then more public-sector investment seems crucial. Alongside other organisations, we are demonstrating that skilful models and data can be produced even in the most data-sparse regions. The meeting was a highlight for us because it further demonstrated that demand for better tools and data is growing. We hope this demand will be met with the increased investment in disaster-mitigation tools that is clearly needed.

AGU – An overview of our 2017 research highlights

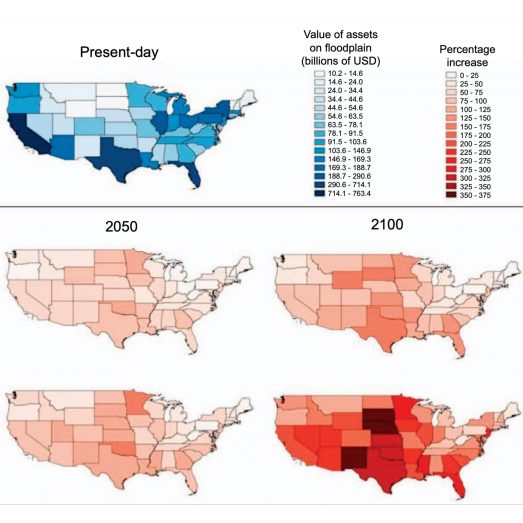

Just last month we attended the annual Fall Meeting of the American Geophysical Union (AGU), the world’s largest and preeminent Earth and space science meeting. The work we presented there provides a good overview of the research we have undertaken over the past 12 months. Among this work was research led by Dr Ollie Wing exploring exposure to flood risk across the United States, which was subsequently picked up by numerous news outlets, including the BBC.

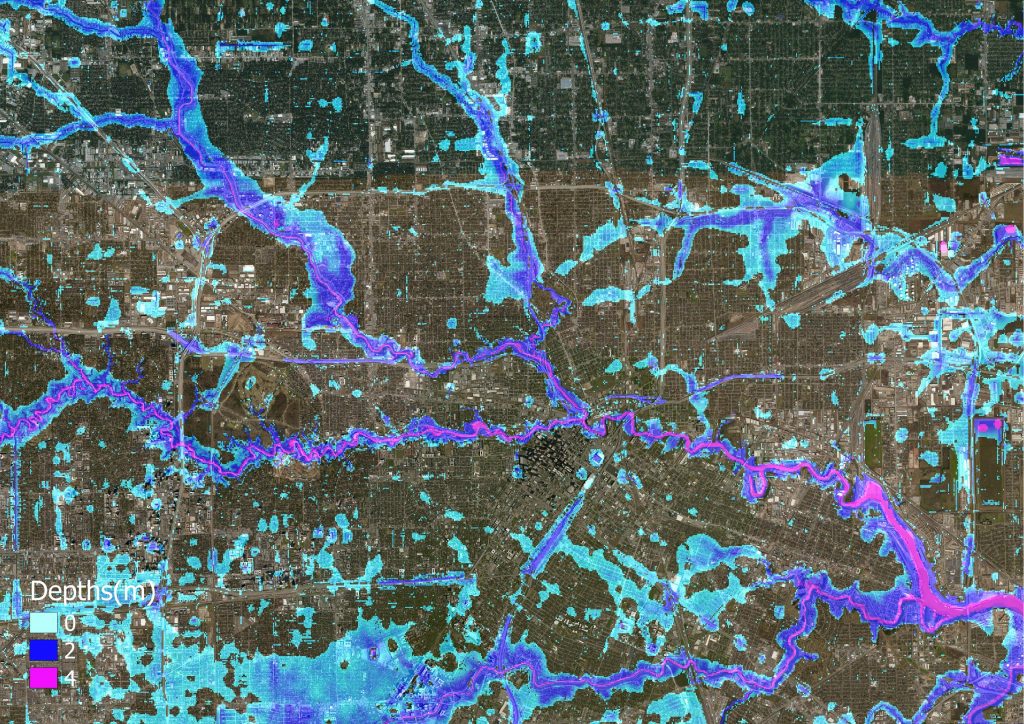

Validating our US Flood Model

Alongside the presentation of US flood analytics, we also presented our work validating our US flood model. This work, published earlier in 2017, represents the first time that a large-scale flood hazard model has been validated. As a company, we have always considered it necessary to validate our models as thoroughly as possible to ensure that our data are used appropriately. Model performance can, of course, be expected to vary across different geographies; validating our models at these scales allows us to tell our clients where they can expect the data to perform well and where they may break down.

Future US stochastic flood model launch

In 2018, we will be launching our first US stochastic flood model, a product that has already been supported by a number of insurance companies. Dr Niall Quinn presented the methodology behind the event-set generation at AGU and, in the spirit of open and transparent data, it will be published in a leading academic journal. We also presented work, again focussed on the US, simulating event footprints during the 2017 hurricane season. As already mentioned, these simulations represent new capabilities in near-real-time event response.

Analysing flood risk for developing countries

Beyond our work in the United States, we also presented an analysis of flood risk across 18 developing countries using a new exposure dataset produced by the Connectivity Lab at Facebook. These new data, created using new technology to map populations, are allowing us to conduct flood risk analysis with unprecedented accuracy. Finally, Dr Jeff Neal presented new methods being developed to estimate river depths, one of the components of a large-scale flood hazard model that currently cannot be measured.

What’s to come in 2018

With so many highlights of 2017, we’re already looking forward to 2018 – there is much to be excited about. Over the coming months we will be releasing our new stochastic US flood hazard model, in collaboration with OASIS-LMF. Significant progress is also being made in the development of new US flood defence data, which we are confident will improve our US model further.

Once our US stochastic model has been deployed, we will be looking to transfer these methods globally, transforming our current world-leading flood hazard data into flood catastrophe models deployable almost anywhere in the world. Moreover, the release of NASADEM, a new global terrain dataset, will undoubtedly improve our global capabilities further.

We remain hopeful that NASA will release these data at some point in 2018. Beyond these goals, we are also engaged in numerous scientific collaborations that will undoubtedly yield significant and unforeseen progress in our field. Here’s to a productive 2018!